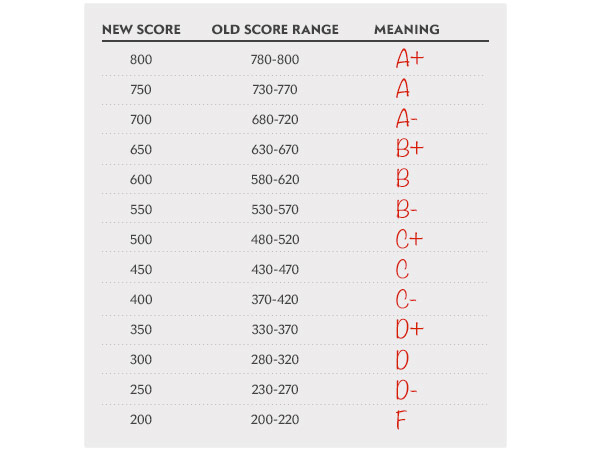

Two weeks back, I wrote a piece at Slate (edited by the wonderful Laura Helmuth) arguing that the SAT should stop giving different scores to virtually identical performances. In particular, I advocate a switch from increments of ten (500, 510, 520…) to increments of fifty (500, 550, 600…).

The basic argument is simple: Reporting “510” and “520” as distinct scores suggests that they’re meaningfully different. They aren’t. When you retake the SAT, your score typically fluctuates 20-30 points per section on the basis of randomness alone. A 510 and a 520 are, for all practical purposes, indistinguishable.
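To put a rough number on "indistinguishable," here is a minimal sketch. It assumes each section score is the student's true score plus normally distributed noise, with a standard error of 30 points (the upper end of the 20-30 range above). The names `SEM`, `GAP`, and `normal_cdf` are illustrative choices, and rounding to reported increments is ignored.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal CDF, computed from the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


SEM = 30.0  # assumed standard error of measurement per section
GAP = 10.0  # assumed true-score gap between two students (e.g. 520 vs. 510)

# The difference of two independent noisy scores has
# standard deviation SEM * sqrt(2).
sd_of_difference = SEM * math.sqrt(2.0)
z = GAP / sd_of_difference

# Chance that the truly stronger student also posts the higher
# score on a single sitting.
p_stronger_scores_higher = normal_cdf(z)

print(f"z = {z:.3f}")
print(f"P(stronger student scores higher) = {p_stronger_scores_higher:.3f}")
```

Under these assumptions the probability comes out only modestly above one half, so a single 10-point gap says little about which student is actually stronger.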

I’ve seen lots of thoughtful counterarguments to my piece—some strong, some weak, some dripping with that mucus-like film of nastiness that coats internet comment sections.

Now—to reply!

This is the most common objection. But it doesn’t hold up to scrutiny.

First, we all know instinctively that adjacent scores on a test represent similar performances. If the SAT changed to 50-point increments, then 600 vs. 650 would no longer read as, “Whoa, a huge five-increment gap!” It would read as, “Oh, a small but probably meaningful one-increment gap.” Which is exactly right.

Second, this argument doesn’t address why no similar test offers such hairsplitting distinctions. Not the ACT. Not the AP. Not NCLB-mandated state tests. Not the MCAT, GRE, or even the LSAT. Not academic classes. The SAT’s level of detail in score reporting is unmatched, even among tests with very similar (or identical!) purposes. Literally everybody else makes coarser distinctions. What makes the SAT different?

This argument overlooks that the data we’re throwing out is more noise than signal.

I resort once again to my analogy of an unreliable scale. Suppose that colleges want not the smartest, most academically accomplished people they can get, but simply the heaviest. (It’s football recruiting season, I guess.)

I step on a scale three times, and get weights of 158, 162, and 157. Then you step on the same scale twice, and get weights of 159 and 158.

Let’s say colleges consider only your heaviest weight (as they do for SAT scores). So I’m a 162 and you’re a 159. Does this mean I’m heavier? On the basis of this data, should colleges favor me—even slightly—over you?

I say no. We’re roughly the same weight, and the scale isn’t precise enough to tell the difference. The fact is, we’re both roughly 160, and whoever’s reporting the scale’s measurements shouldn’t claim a level of precision that the scale doesn’t actually offer.
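The scale example can be checked with a few lines of arithmetic. This is only a sketch using the five readings quoted above; the variable names are illustrative.

```python
from statistics import mean

my_readings = [158, 162, 157]  # my three weigh-ins from the example
your_readings = [159, 158]     # your two weigh-ins from the example

for name, readings in (("me", my_readings), ("you", your_readings)):
    spread = max(readings) - min(readings)
    print(f"{name}: max={max(readings)}, mean={mean(readings)}, spread={spread}")

# Gap between our "best" readings, versus how much my own
# readings wander from one weigh-in to the next.
gap_in_max = max(my_readings) - max(your_readings)
my_spread = max(my_readings) - min(my_readings)
print(f"gap between maxima: {gap_in_max}, my own spread: {my_spread}")
```

The gap between our maxima is smaller than the spread in my own readings, and our averages differ by half a pound. Note also that taking the maximum tends to favor whoever steps on the scale more often.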

For what it’s worth, I’d support the SAT reporting detailed performance breakdowns to the students themselves. That’d be helpful feedback. But it’d be silly to give such volatile, noisy data to colleges.

Yes, some do—including, I suspect (or hope), most college admissions officers. But even savvy admissions departments have an incentive to boost their school’s perceived selectivity by taking students with higher scores. An extra 10 or 20 points makes little difference for a student; but it can make a big difference for a college’s rankings.

In addition, lots of people don’t realize how volatile SAT scores are.

Tutoring services promise “increases” in SAT scores, knowing full well what the parents are missing: that a small boost is likely to occur on the basis of sheer randomness. Meanwhile, kids with scores ending in “90” are far more likely to retake the test, hoping the next session will put them “over the hump.”

Now we’re getting to the heart of the issue. The strongest version of this argument goes something like this:

For an individual student, there may be little difference between a 710 and a 720. But in the aggregate, a large group of kids with 720 should outperform those who scored 710. This distinction is thus useful for colleges, who are (after all) dealing with large applicant pools and need a way to make distinctions.

I’m arguing schools shouldn’t factor small distinctions in SAT scores into their admissions decisions. In that case, what should they use?

At most colleges, the answer is simple: transcripts. GPA is hands-down the best predictor of college success, especially once you consider the rigor of a student’s coursework.

As for Ivies and their hyper-competitive ilk, they already put limited stock in SAT scores. At Yale, for example, a student with 97th-percentile SAT scores (that is to say, a 2120) has no better chance at admission than a student at the 89th percentile (that is, a 1920). (Note: Admission data is from 2005, percentile data from 2006.)

If even hyper-selective schools (who presumably pour the most effort into admissions) don’t care about small differences in SAT scores, why should anyone?

The trickiest case is big, highly competitive state schools like UC Berkeley and the University of Michigan. They receive tens of thousands of strong applications per year, and necessarily rely on a semi-automated, algorithmic process to make decisions.

Here, more than anywhere else, fine-grained SAT scores might help.

This is where we exceed the limits of my expertise, so I turn to the professionals. One researcher finds that your school’s average SAT score (which correlates highly with privilege) is actually a better predictor of your college performance than your individual SAT score. Other researchers reach similar conclusions. Though I can’t rule out the possibility, I’m not convinced that a 10-point swing in SAT scores should play any role in the admissions process at any school.

My plan/hope is to interview some college admissions officers, dig deeper into the research, and revisit this issue. Stay tuned!

Per the Slate comments, the LSAT actually does offer 61 different scores, which weakens your rhetorical point.

What I didn’t see in the Slate comments was that your comparison between SAT scores and class grades is false, because they measure fundamentally different things. Class grades reflect what a student has done with perfect accuracy (at least in the matter of test-taking: actual learning is another story), and the more precise, the better: a 90 student really is doing better on average than an 89 student, and nothing is gained by throwing that point away except to soothe the butt-hurt of the innumerate. SAT scores are predictive: they reflect what a student is expected to do, and shouldn’t be more precise than they are accurate.

LSAT gives 61 different scores for the entire test; SAT gives 61 per section, for a total of 181 over a morning of testing.
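Those counts are easy to verify. The sketch below assumes score ranges that the thread does not spell out: 200-800 per SAT section, 600-2400 for the three-section composite, and 120-180 for the LSAT. The helper `count_scores` is a hypothetical name.

```python
def count_scores(low: int, high: int, step: int) -> int:
    """Number of distinct reportable scores from low to high inclusive."""
    return len(range(low, high + 1, step))


sat_section_by_10 = count_scores(200, 800, 10)     # current per-section scale
sat_composite_by_10 = count_scores(600, 2400, 10)  # three sections combined
sat_section_by_50 = count_scores(200, 800, 50)     # proposed 50-point increments
lsat_total = count_scores(120, 180, 1)             # whole-test LSAT scale

print("SAT section, steps of 10:", sat_section_by_10)
print("SAT composite, steps of 10:", sat_composite_by_10)
print("SAT section, steps of 50:", sat_section_by_50)
print("LSAT, whole test:", lsat_total)
```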

A level maths/FM (or rather, A level maths/FM as Cambridge want your grades reported) is an exception: they take percentile(ish, because UMS weirdness) scores on each of 12 modules, for an utterly ludicrous number of combinations. If you want single-test arrangements, go for the linear GCSE: that’s got (roughly, because again, UMS weirdness) 600 different possible results. The difference is that they also provide a rough guideline letter grade (with seven and nine grades respectively): those institutions that don’t care about the fine-grained detail (read: most of them) just ask for the letter grades, and those that do ask for it.

You make a number of statements and provide arguments that lack quantitative rigor, e.g., “we all know instinctually…”; “When you retake the SAT, your score typically fluctuates 20-30 points per section on the basis of randomness alone. A 510 and a 520 are, for all practical purposes, indistinguishable.” Where is the data on variance with repeated testing? Is it skewed i.e. are repeat test takers equally likely to do better or worse? You are trying to make a quantitative conclusion about granularity, but you really haven’t proven that the dynamic range of scoring is statistically inappropriate.

I’m getting those numbers from this document, which I linked to in my original piece:

Test-Characteristics-of-SAT-2013.pdf

As for the 510 and 520 being “indistinguishable,” it’s certainly a qualitative judgment, but I think most would agree that a gap only 1/3 as large as the standard error is not, in most settings, significant.

I totally agree. I’m off to college this coming fall, and during my preparation, I chose to forgo the SAT in favor of the ACT, almost solely because the SAT’s grading system was a bit intimidating. It seemed like every ten points was vital to my admission to college, and it also seemed far too easy to lose ten points. The ACT, however, with its 36-point scale, provides an accurate evaluation of your knowledge (unlike the AP, which only has a 5-point scale, which is much simpler, but lacks adequate data) while decreasing the natural fluctuations in one’s score inherent in the SAT. That being said, I think having 20-point increments would more adequately reduce these fluctuations while simultaneously providing adequate data about candidates. Having 20-point increments would essentially make it a 40-point scale, putting it on the same level as the ACT.

Thanks for the comment. I actually tend to think the ACT is a bit too fine-grained in its scoring, too, but it’s certainly better than the SAT.

Bravo on the line regarding GPA. Consistent studies have shown that the best predictor of achievement and degree completetion in college IS grade point average from high school, NOT SAT scores. SAT scores can show knowledge attained (and likely memorized well) and that’s about it. It does nothing to predict how hard a student works, how dedicated they are to their passions, their level of responsibility, and the myriad of other things a GPA will represent. In fact, as an educator in a low income school, I know that SAT is STILL biased towards those who don’t experience poverty, so if the SAT scores are the deciding factor in admissions, when students have been working since middle school to achieve their way OUT of poverty, they’re screwed from birth.

The problem with arguing high school GPA is better at predicting college performance than SAT scores is it ignores one important aspect of a selection process: incremental validity. The reason selection boards use multiple measures to select students is that each measure provides a ‘slice’ of the unknown variance in performance/ability. High school GPA is very predictive of freshman GPA…BUT it only accounts for a portion of the total variance. In order to more completely assess a candidate, we need to use other measures that provide incremental predictive validity above and beyond high school GPA. Admissions tests do just that.

Personal note: I was well below the poverty line when I took the SAT. I agree that the test is biased against low SES individuals…but so are all of our best predictors.

Very true about the additional predictive power gained from considering SAT/ACT scores; if that weren’t the case, it’d be a no-brainer to abolish such tests completely.

The deep problem with the correlation between SES and predictors of college performance is that those predictors aren’t necessarily “wrong”; they could be accurately reflecting the inequities of our society and the way those play out in higher education success rates. Addressing the problem means sifting out the diverse (and sometimes conflicting) goals of university admissions – not just taking the students most likely to succeed, but those who in some sense will “benefit” most from the education.

Dang it – completion!

What you are essentially advocating is creating larger bands…bands of ‘indifference’ in which we treat scores as the same (b/c of various uncontrollable factors such as measurement error). The problem with making bands wider is that it lowers the predictive validity of SAT scores on college performance (e.g. the correlation between SAT scores and college freshman GPA). This is generally frowned upon b/c those making selection decisions should opt to use test scores in the most valid way possible: Top-down selection.

The method you are advocating is referred to as ‘banding’ in the I/O and testing education literature. It is a fiercely debated topic because the larger the bands, the greater the loss in predictive validity (the standard by which we conclude a measure is ‘good’). Many (such as Cascio 1991) advocate establishing bands using the Standard Error of Measurement…but the literature has shown that these procedures can lead to a very large loss in predictive validity.

Here is a good article: http://www.krannert.purdue.edu/faculty/campionm/Controversy_Score_Banding.pdf
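The validity loss from banding can be illustrated with a toy simulation. Everything here is an assumption made for illustration, not data from the linked paper or from the College Board: a normally distributed "true ability," a test with 30 points of noise, a hypothetical college outcome with its own noise, and band widths of 10, 50, and 200 points.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def band(score, width):
    """Round a score to the nearest multiple of the band width."""
    return width * round(score / width)


rng = random.Random(0)  # fixed seed so the run is repeatable
N = 20_000

# Assumed model: a test score and a college outcome are both noisy
# readings of the same underlying ability.
true_ability = [rng.gauss(500, 100) for _ in range(N)]
test_score = [t + rng.gauss(0, 30) for t in true_ability]
college_outcome = [t + rng.gauss(0, 150) for t in true_ability]

# "Predictive validity" here is just the correlation between the
# banded test score and the outcome.
validity = {
    width: pearson([band(s, width) for s in test_score], college_outcome)
    for width in (10, 50, 200)
}

for width, r in validity.items():
    print(f"band width {width:>3}: correlation with outcome = {r:.3f}")
```

In this toy model, widening the bands from 10 to 50 points costs very little correlation, while very wide bands cost noticeably more. The actual size of the loss depends entirely on the assumed noise levels, so this shows the shape of the trade-off and does not estimate it for the real SAT.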

The problem with so-called “predictive validity” is that it is not validity at all, but reliability in disguise. We determine how valid measure A is by comparing it with “gold standard” measure B, which is assumed to be 100% valid. But the purpose of college is not to produce students with high freshman GPAs.

Thanks for the comment and the link. While there are some breaks in the implicit analogy between admitting college students and hiring workers (including the distinction between a company’s goal of earning money, versus a university’s more diverse social goals as a nonprofit), a lot of the critique of banding seems to apply to the SAT setting.

It’s worth pointing out that the SAT is already banding (by using increments of 10). Some of the arguments in the paper point towards “the less banding, the better,” though as John points out, it’s easy to overestimate the actual validity of SAT scores (which, compared to the numbers cited in the Banding paper, already have lower validity).

No. Thank you! I thoroughly enjoy reading your blog, Ben! It is very thought provoking.

I decided to comment on this particular post because it is a topic debated in I/O Psychology. Most psychometricians/ statisticians would agree that bands that are too wide are bad for validity.

There is a precedent for using bands to increase ‘fairness’ in a selection procedure. For example, in selection for employment decisions, banding is often used to lower disparate impact against minority applicants. Bands allow the consideration of predictors other than the test score…which is a good thing.

There is one more point I’d like to make though. While I do agree with the practice of very limited banding…I don’t think high school GPA is a good measure to use within the band to make decisions. Most certainly it is not to be used in lieu of SAT scores. High school GPA is an unstandardized point estimate of a student’s performance. GPA scores are difficult to compare across schools, school districts, and especially states. The standards for earning a 4.0 in one school may be completely different from the requirements of a school in another district. In fact, GPA may not even be on a 4-point scaling! What do you do then? The process of equating GPAs across applicants is time consuming. Standardized test scores are widely used for selection because they are standardized…it makes it very easy to compare scores.

I like that you point out in another comment that banding cutoffs are subjective judgments (even if we use a statistical criterion such as SEM to help out). I agree with that. And there will always be a debate as to how wide the bands should be. But I am skeptical of the suggestion that GPA should be weighted more heavily in selection decisions.

What about the GMAT?

Looks like the GMAT falls somewhere between the ACT and SAT. According to Wikipedia, each section has the following numbers of possible scores:

Quantitative: 41

Verbal: 41

Integrated reasoning: 8

Analytical writing: 13

It then says “total score 200 to 800,” but I don’t know what intervals they use for the total score.

In any case, that’s still fewer distinctions than the SAT uses, though more than most other tests.

Question you may be able to answer: How does the SAT system compare to the English IGCSE/A-Levels? And will those be any use at all for entry to an American college?

My understanding is that A-Levels are more similar to AP Exams in the US (in that they’re done as a culminating exam for an academic course in a specific subject). The SAT is intended more as a test of general skills, rather than of specific content. But I’m really not too knowledgeable about this, and I have no idea how American colleges look at A-levels.

Okay, thanks.

In general I think this is great, but I’m going to nitpick, sorry:

The correlation with SAT and socioeconomic status is in the low .30s. I agree it’s significant but I wouldn’t describe that as “highly correlated”…

Hmm. What’s the correlation with college GPA?