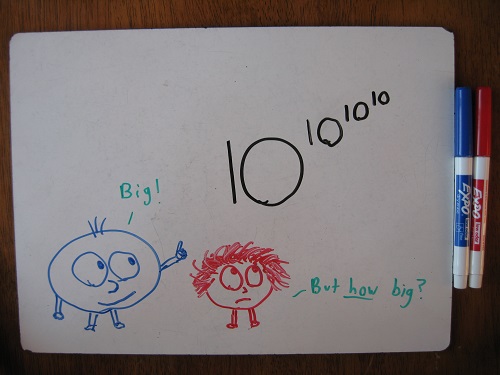

Student: What’s the biggest number you know?

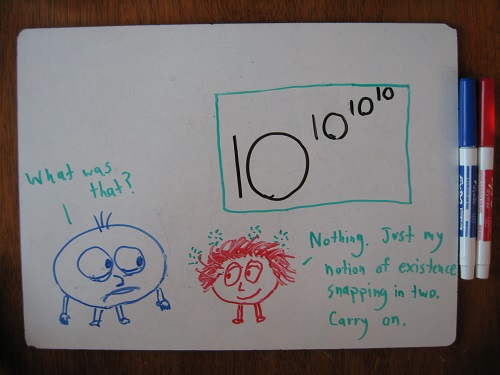

Me: Well, here’s a pretty big one. 10^10^10^10.

Student: What’s that, like a billion?

Me: Not quite. See 10^10? That’s ten billion. It’s about the number of people in the world, plus 3 bonus Indias.

Student: All right! Bonus Indias!

Me: Okay, so you can picture 10^10?

Student: Well, not really, but I know what it means. Keep going.

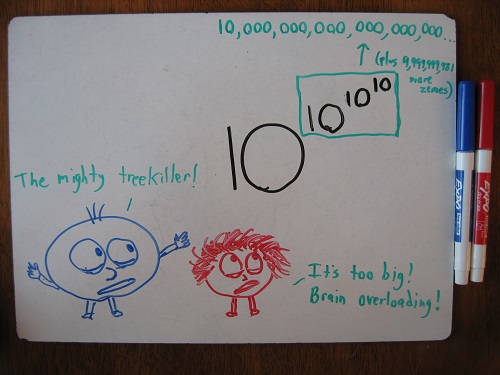

Me: Okay. Now 10^10^10 is a 1 followed by 10 billion zeroes. Like, turn all those people into zeroes, line them up behind a numeral 1. That’s 10^10^10.

Student: Whoa.

Me: Just to write it out, you’d need about 3 million sheets of paper. That’s a 9 foot x 9 foot x 12 foot stack.

Student: But the poor trees!

Me: I know. Let’s call this number the tree-killer.

Student: Yeah. Stupid tree-killer!

Me: So picture the tree-killer. It’s a massive number. Absurd. Grotesque. Beyond imagination.

Student: Right.

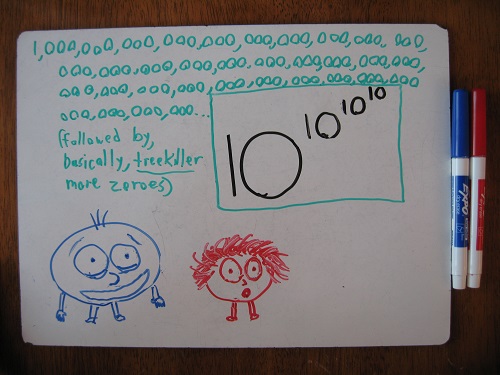

Me: Now, 10^10^10^10 is a 1 followed by a tree-killer of zeroes.

Student: Say what now?

Me: We just discussed how the tree-killer is the craziest, hugest, most unfathomable thing you’ve ever tried to fathom, right?

Student: Right.

Me: Well, that unfathomable monstrosity of a number… that’s how many digits are in my number.

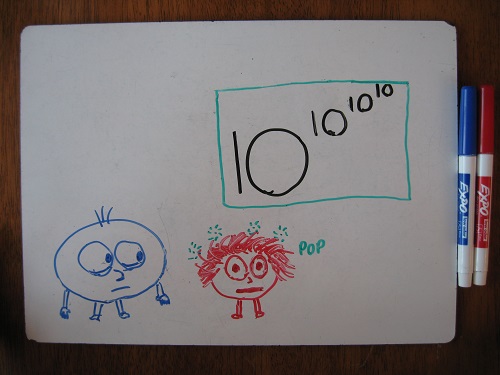
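The arithmetic in the dialogue can be checked without ever building these numbers. In the small Python sketch below (the helper name `digits_of_power_of_ten` is invented for illustration), note that a tower like 10^10^10 is read from the top down, as 10^(10^10) rather than (10^10)^10 = 10^100; Python’s `**` operator groups the same way. The last lines simply divide 10 billion zeroes by the 3 million sheets quoted in the dialogue, to see how many zeroes each sheet would have to hold.

```python
# Python's ** is right-associative, like the towers in the dialogue:
# 10^10^10 means 10^(10^10), not (10^10)^10 = 10^100.
assert 2 ** 3 ** 2 == 2 ** (3 ** 2) == 512
assert (2 ** 3) ** 2 == 64


def digits_of_power_of_ten(k: int) -> int:
    """Decimal digits in 10**k: a 1 followed by k zeroes."""
    return k + 1


# Sanity-check the formula on small exponents by actually building the numbers.
for k in range(50):
    assert len(str(10 ** k)) == digits_of_power_of_ten(k)

# The tree-killer, 10^(10^10), has ten billion zeroes plus the leading 1.
tree_killer_digits = digits_of_power_of_ten(10 ** 10)
print(tree_killer_digits)  # 10000000001

# 10^10^10^10 has (tree-killer + 1) digits; that digit count is itself far
# too large to build, so the sketch stops here.

# Paper check: zeroes per sheet implied by the 3-million-sheet estimate.
zeroes_per_sheet = 10 ** 10 / 3_000_000
print(round(zeroes_per_sheet))  # 3333
```

This is only the division implied by the dialogue; how many characters actually fit on a sheet depends on font and layout, which the post doesn’t specify.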

Conversations like this remind me of Graham’s number. Well played.

One of my professors once told me that the log log of basically any number in the universe is effectively less than five.

It’s great for hammering home just how big repeated exponentiation can get, and how much cooler math is than physics. *They* have to stop at a certain level of incomprehensibility; we can just keep going.
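The professor’s rule of thumb is easy to test on powers of ten, because log(log(10^e)) with base-10 logs is just log10(e). This sketch assumes base-10 logarithms (the comment doesn’t say which base) and uses the commonly quoted rough figure of 10^80 atoms in the observable universe; the helper name `loglog10_of_power_of_ten` is hypothetical.

```python
import math


def loglog10_of_power_of_ten(exponent: float) -> float:
    """log10(log10(10**exponent)), which simplifies to log10(exponent).

    Working with the exponent avoids building astronomically large numbers.
    """
    return math.log10(exponent)


# Assumption: ~10**80 atoms in the observable universe (a common rough estimate).
atoms_loglog = loglog10_of_power_of_ten(80)
print(round(atoms_loglog, 3))  # 1.903

# The tree-killer, 10**(10**10), already has a log-log of 10,
# so by this yardstick it is bigger than anything you could count in the universe.
tree_killer_loglog = loglog10_of_power_of_ten(10 ** 10)
print(round(tree_killer_loglog, 3))  # 10.0

# log-log < 5 exactly when the number is below 10**(10**5),
# i.e. a 1 followed by 100,000 zeroes.
print(round(loglog10_of_power_of_ten(10 ** 5), 3))  # 5.0
```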

You’re missing a set of three more zeros in Picture 2, I believe!

Mliha, Ben. I like it, but watch those drawings: they are getting better.

Perhaps you’ll have to change the blog name to Math with Okay Drawings.

themathmaster: Had to go look up Graham’s number. My head started swimming.

Nate: Well said. And good point about log log.

My dad once told me about a function that comes up when you’re designing algorithms. It grows more slowly than any chain of log(log(log(…)))’s, so for all practical purposes you can think of it as a constant. But since it TECHNICALLY grows without bound, if you have it as a term in your algorithm’s running time, it doesn’t count as a constant (which matters for measuring the speed of algorithms).

e: It wouldn’t be a Math with Bad Drawings post without a boneheaded mistake in the drawings, would it? 😉

Farid: Mliha! Dooga dooga on the drawings.

Mr. Dardy: That’s a very kind sentiment. Luckily (or unluckily), I don’t think we’re quite there yet!

You probably mean log*, the iterated logarithm: the number of times you have to apply lg (log base 2) before the result drops to 1 or below.

lg* 1 = 0

lg* 2¹ = 1

lg* 2² = 2

lg* 2⁴ = 3

lg* 2¹⁶ = 4

lg* 2⁶⁵⁵³⁶ = 5

…
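The table above follows from the usual recursive definition: lg* n is 0 when n ≤ 1, and otherwise 1 + lg*(lg n). A minimal Python sketch (the function name `lg_star` is my own) reproduces each row; the last input is the exact integer 2^65536, which Python’s `math.log2` accepts even though it is far too large for a float.

```python
import math


def lg_star(n: float) -> int:
    """Iterated base-2 logarithm: how many times lg must be applied
    before the value drops to 1 or below."""
    count = 0
    while n > 1:
        n = math.log2(n)  # works on arbitrarily large Python ints
        count += 1
    return count


# Rows of the table: (label, n, expected lg* n).
rows = [
    ("1", 1, 0),
    ("2^1", 2 ** 1, 1),
    ("2^2", 2 ** 2, 2),
    ("2^4", 2 ** 4, 3),
    ("2^16", 2 ** 16, 4),
    ("2^65536", 2 ** 65536, 5),  # exact big int; never converted to a string
]
for label, n, expected in rows:
    assert lg_star(n) == expected
    print(f"lg* {label} = {lg_star(n)}")
```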

Actually, log* n grows even faster than another obscure function, α(n) (the inverse Ackermann function). But α(n) happens to be the amortized running time per operation of the standard disjoint-set (union-find) algorithm, which uses union by rank and path compression, and it has been proven that no disjoint-set algorithm can do asymptotically better, even though “α(n) is less than 5 for all remotely practical values of n”!

(Source: https://en.wikipedia.org/wiki/Disjoint-set_data_structure)
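For the curious, here is a minimal Python sketch of the disjoint-set structure described on that page, combining union by rank with path compression, the pairing whose amortized cost per operation is O(α(n)). The class and method names are illustrative choices, not taken from any library.

```python
class DisjointSet:
    """Union-find over the elements 0..size-1."""

    def __init__(self, size: int) -> None:
        self.parent = list(range(size))  # each element starts as its own root
        self.rank = [0] * size           # upper bound on each root's tree height

    def find(self, x: int) -> int:
        # First pass: walk up to the root.
        root = x
        while self.parent[root] != root:
            root = self.parent[root]
        # Second pass (path compression): point every node on the path at the root.
        while x != root:
            next_x = self.parent[x]
            self.parent[x] = root
            x = next_x
        return root

    def union(self, a: int, b: int) -> bool:
        """Merge the sets containing a and b; return False if already merged."""
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return False
        # Union by rank: hang the shorter tree under the taller one.
        if self.rank[ra] < self.rank[rb]:
            ra, rb = rb, ra
        self.parent[rb] = ra
        if self.rank[ra] == self.rank[rb]:
            self.rank[ra] += 1
        return True


ds = DisjointSet(6)
ds.union(0, 1)
ds.union(1, 2)
ds.union(3, 4)
print(ds.find(2) == ds.find(0))  # True
print(ds.find(3) == ds.find(0))  # False
```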
