a dispatch from the fourth annual Heidelberg Laureate Forum

Early in his talk, computer scientist John Hopcroft noted a funny fact about clustering algorithms: they work better on synthetic data than real data. But this is more than an odd tidbit about software.

It’s an insight into the nature of our world.

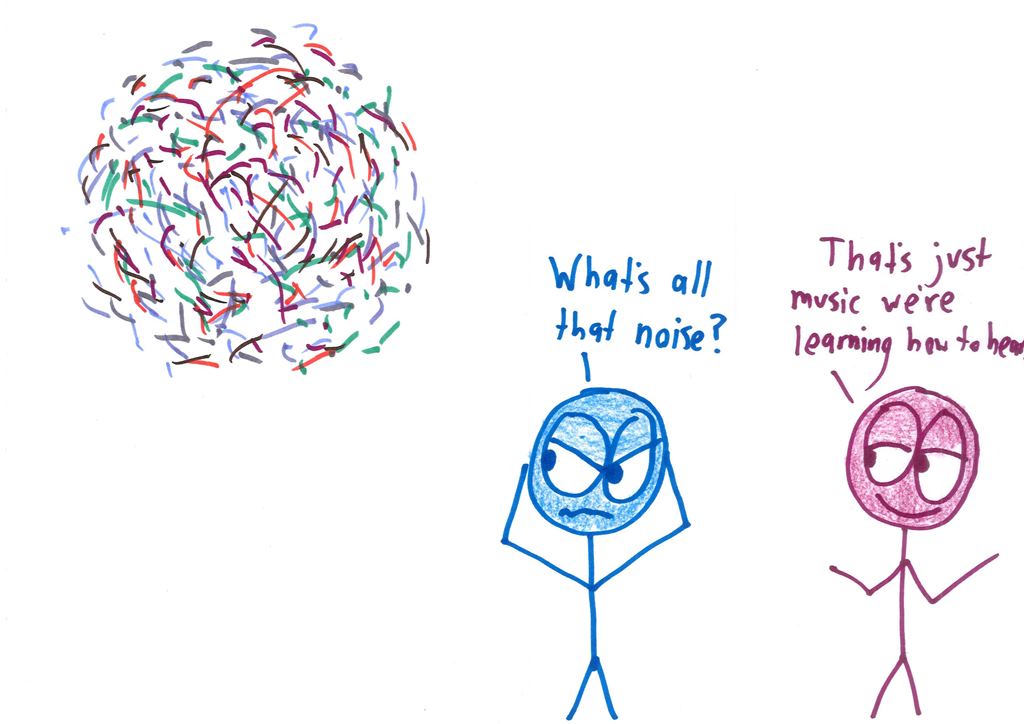

When we invent our own synthetic data, we try to mimic real data by mixing true information with random distraction–combining “signal” with “noise.” But in real data, the divide isn’t so clear. What often looks like noise turns out to be the deep structure we haven’t grasped yet.

The noise is just signals you can’t yet hear.

Hopcroft’s insight: data doesn’t just have one structure. It has many. If I scanned notebooks from a hundred people, and made a database of all the individual letters, I could sort them lots of ways. Alphabetically. Capital/lowercase. Size. Darkness. Handwriting. Each of these is a different layer of structure.

And to understand data–and the world–you’ve got to reckon with all those layers.

Here’s the approach Hopcroft outlined. First, cluster your data according to its primary, surface-level structure. Then, wipe the slate—scramble this structure—and run your algorithm again, to find the hidden structure.

Uncover the signal you thought was noise.

For example, Hopcroft and his colleagues ran their algorithm on Facebook data from Rice University. They had sparse information: no names, no profiles, just who was friends with whom—a skeleton network of connections. Based on this, their algorithm quickly sorted the students into nine clusters.

But was it merely random, algorithmic gibberish? After all, the computer knew nothing about the students, other than the friendship connections. Did the nine clusters have any actual meaning?

Sure. They corresponded precisely to the nine student dorms.

Then they weakened that structure, and ran the algorithm again. This time it produced only four clusters. The computer, of course, had no idea what these clusters meant—it only knew that friendships at Rice reflected a hidden four-group structure. Those groups?

Freshman, sophomore, junior, and senior.

The most tantalizing fact: there were two more layers of structure revealed by the algorithm, but it remains a mystery what they are. In the lives of those undergraduates, among everything we call “noise,” there are still hidden signals.

You can see the entire talk here.

That’s amazing 🙂

How do you weaken the structure of data?

My guess would be to multiply the “value” of each friendship connection within a group by a value less than one. So each friendship within each dorm would be worth a fraction of what it was before when determining the next level cluster.

So each dorm friendship is worth less in relation to the other, non-dorm friendships? Which then makes the other friendships more “valuable” (data wise), and show up more obviously when plotted…is that right?

Yep, that’s what I’m thinking! But I don’t actually know what they did. 🙂

nice write up.

relating noise to unprocessed data

Very informative , well written and clearly explained Article.

John Hopcroft’s book The design and analysis of computer algorithms

is the best book i have come across so far to study algorithms

inorder to create the basis of cluster analysis and clustering algorithms

Thank You so much for sharing You knowledge on Hopcroft’s insight

Great article ^_^

penzu