Man—I had a whole, scathing essay written and ready to go.

The title: The SAT Changed Their Guessing Policy to Appear Fairer, But It’s Actually Less Fair. “With the ACT pulling ahead in the admissions test Cola Wars,” I wrote, “I struggle to greet the SAT’s announced changes with anything but cynicism.”

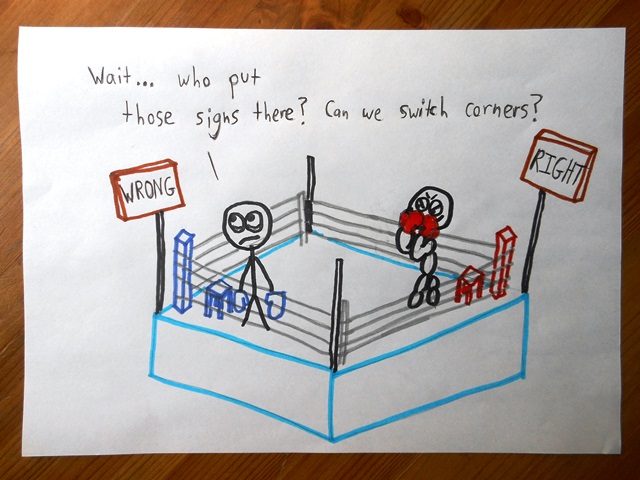

I was halfway into the boxing ring when I realized I was on the wrong side of the fight.

This little fable is about the SAT’s “guessing penalty,” and while it’s a tale full of technicalities, I promise it’ll end with a moral. A moral so obvious, it’s surprising.

Or perhaps vice versa: so surprising, it’s obvious.

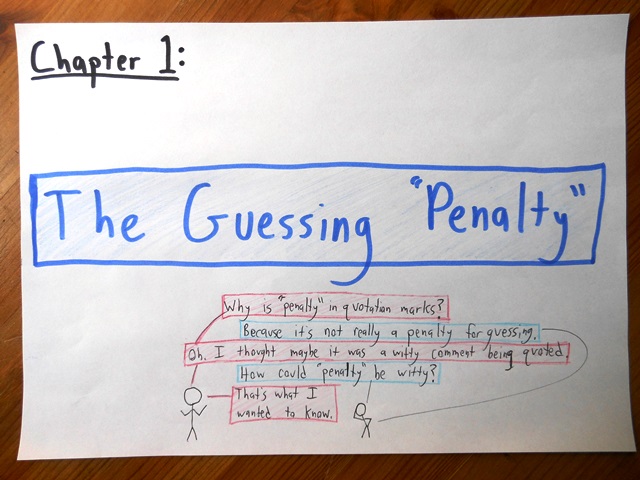

Although the term “guessing penalty” appears practically everywhere (including the New York Times), the College Board never uses it. And the College Board is right. There’s no way to penalize guessing, per se—after all, the SAT only sees right answers and wrong ones. They’ve got no way of knowing whether you arrived at your response by cold, logical deduction or by blind, stupid luck.

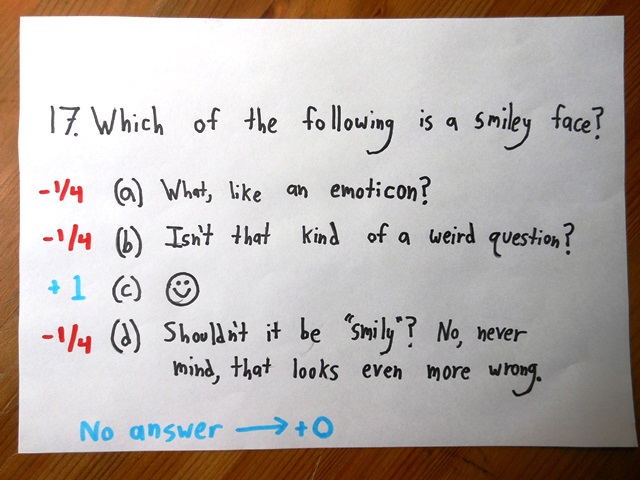

Instead, the current SAT penalizes wrong answers. You get 1 raw point for answering a question right, and lose ¼ of a point for answering it wrong. (A blank answer neither adds nor subtracts from your total.)

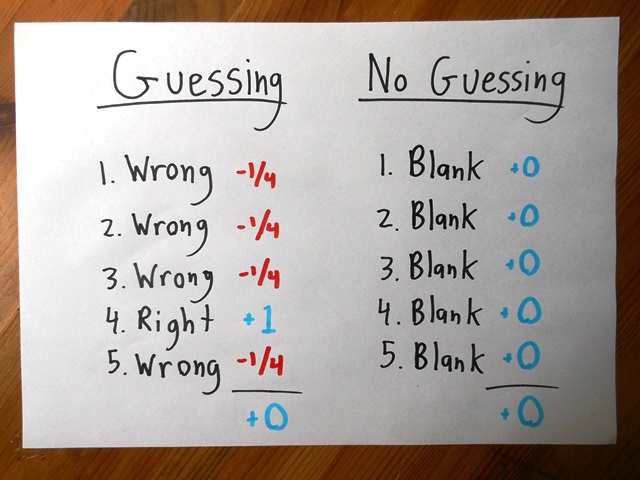

This system doesn’t aim to penalize guessing. It aims to neutralize it.

Let’s say you guess blindly on five questions. On average, you’ll get one right (+1) and the other four wrong (-¼ – ¼ – ¼ – ¼), for a net score of zero. Since the outcome is no different than if you’d simply left all five questions blank, guessing should neither help nor hurt you overall. It just adds a greater element of randomness to your score.
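For anyone who wants to check that arithmetic, here is a minimal sketch. It assumes five answer choices per question (the function and constant names are mine, not the College Board's), and it also computes the variance, which is the "randomness" part.

```python
from fractions import Fraction

# Old SAT rule as described above: +1 for a right answer, -1/4 for a wrong one.
RIGHT = Fraction(1)
WRONG = Fraction(-1, 4)

def blind_guess_mean(num_choices: int) -> Fraction:
    """Expected raw points from one uniformly random guess."""
    p_right = Fraction(1, num_choices)
    return p_right * RIGHT + (1 - p_right) * WRONG

def blind_guess_variance(num_choices: int) -> Fraction:
    """Variance of the raw points from one uniformly random guess."""
    p_right = Fraction(1, num_choices)
    second_moment = p_right * RIGHT**2 + (1 - p_right) * WRONG**2
    return second_moment - blind_guess_mean(num_choices) ** 2

# Assumption: five answer choices per question, five blind guesses.
print(5 * blind_guess_mean(5))      # expected net points over five guesses
print(5 * blind_guess_variance(5))  # variance over five independent guesses
```

The mean comes out to zero, but the variance does not: same average score, wider spread.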

Why did the College Board ditch this longstanding system? For simplicity’s sake.

In their announcement, they described the old system as “Complex Scoring.” That’s fair. For their whole lives, students have taken tests with no distinction between blank answers and wrong answers—both are worth zero. Then, on the SAT, when the stakes are the highest, the rules of the game suddenly switch, and blank becomes (slightly) better than wrong.

This unfamiliar system might seem to confer an advantage on wealthy families. After all, they can afford tutors to explain the rule and its implications, while poorer students remain in the dark.

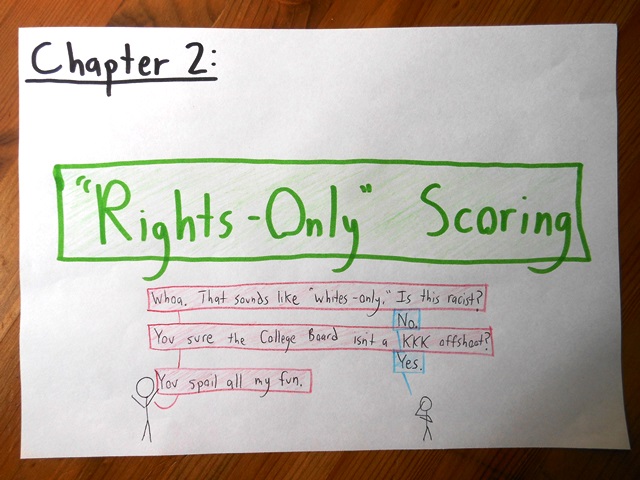

Hence, the new and cleaner system: One point for right answers. Period.

Unfortunately, this revision doesn’t make the test less gameable. It makes the test appear less gameable, while actually making it more so.

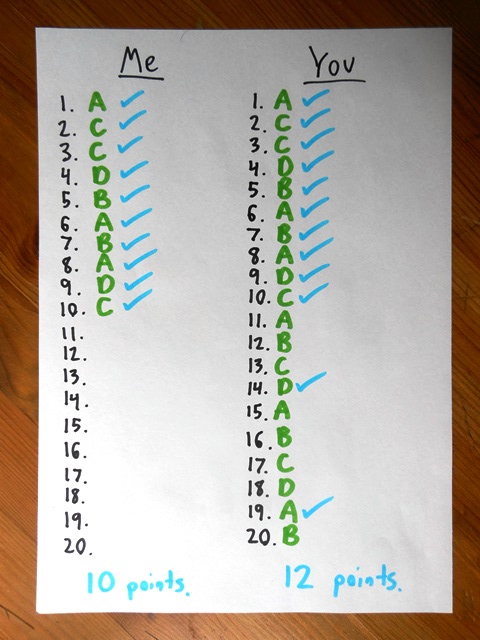

To see why, suppose that you and I both take a timed section with 20 questions. Being similarly skilled (and rather slow) students, we each get through question #10, finishing it with a few seconds to spare.

But then, I spend those final few moments working (naturally, it would seem) on problem #11. You, on the other hand, spend the remaining time randomly bubbling answers for #11-20.

Clever move on your part. Under the old rule, this would’ve merely added randomness to your score. But under the new rule, you’ll get credit for your right guesses and suffer no cost for your wrong ones. On average, it’ll boost your score by 2 points—a 20% improvement over mine.

You and I knew precisely the same number of actual answers, but you did significantly better, because you employed the right test-taking strategy.
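The bubbling scenario can be sketched the same way. This assumes five choices per question, ten randomly bubbled answers (#11-20), and that we both got our first ten questions right; the helper name is hypothetical.

```python
from fractions import Fraction

def expected_gain_from_bubbling(num_guessed: int, num_choices: int,
                                wrong_penalty: Fraction) -> Fraction:
    """Expected raw-point gain from random guesses, compared with leaving them blank."""
    p_right = Fraction(1, num_choices)
    per_question = p_right - (1 - p_right) * wrong_penalty
    return num_guessed * per_question

# Assumptions: five choices per question, ten questions bubbled at random.
old_rule = expected_gain_from_bubbling(10, 5, Fraction(1, 4))  # wrong answers cost 1/4
new_rule = expected_gain_from_bubbling(10, 5, Fraction(0))     # wrong answers cost nothing

print(old_rule)       # expected gain under the old rule
print(new_rule)       # expected gain under the new rule
print(new_rule / 10)  # gain relative to a score of 10 earned on questions #1-10
```

Under the old rule the expected gain is zero; under the new rule it is two points, or one-fifth of the ten points earned the honest way.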

Isn’t that precisely the scenario the new SAT is supposed to help avoid: rewarding students not for their knowledge of the content, but of the test?

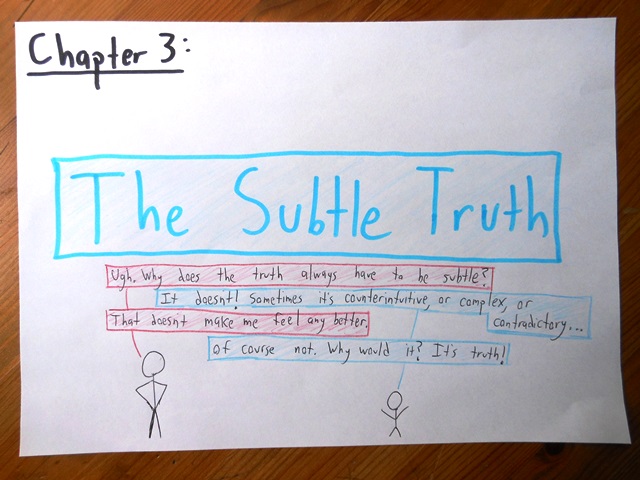

But this is where I got it wrong, and where the SAT—to their credit—got it right.

The crucial fact is that, even though it’s perfectly possible to earn a solid score (say, a 600 per section) while answering only two-thirds of the questions, virtually no students take that approach. Out of every 25 questions, the typical student answers 24.

It’s strange—the system gives them no incentive to do this. They’d largely be better off focusing on 12 or 14 questions, making sure to get them right, rather than spreading themselves thin by hurrying to finish. Even so, students answer virtually every question, leaving almost no blanks.

The SAT’s revision, then, is just a response to the facts on the ground. Kids are already answering all the questions. In this landscape, penalizing wrong answers, on top of rewarding right ones, is redundant and confusing.

And here we come to the moral, the take-home message, the thing I learned from picking a losing rhetorical battle with the College Board, and then wising up.

It’s vacuous to call a test “good in theory.” For an assessment, the only thing that matters is practice. The measure of a test’s success lies in its interactions with the humans that take it.

When designing (or pondering the design of) a behemoth test like the SAT, it’s tempting to think of it as a cold, impersonal system of incentives. It’s easy to forget that it will land on the desk not of some abstract test-taking machine but of real, flesh-and-blood students.

As such, it must obey the imperative of every test: to respond to the needs and habits of the test-takers.

A test may appear transparent, fair, and perfectly organized to an outsider, but if it confuses the students and obscures their abilities, then it’s worthless. An ingenious bit of test-craft gets you nowhere if it asks students to fight against instincts that they simply can’t overcome.

So College Board, I apologize for the hate-letter I never sent. You were right.

I think you were right the first time. The new test scoring scheme is deliberately easier to game. It is intended as a penalty for the risk-averse who would rather be silent than wrong. It is now considered a sin in the US to be risk-averse: all rewards must go to those who gamble most.

Hmm. I think I agree with you about the broader cultural point – we Americans tend to reward bravado over thoughtful ambivalence – even though I’m not sure I see the SAT as a battleground of that particular war.

This discussion misses the fact that the SAT (and many standardized tests, for that matter) no longer uses summed scoring (i.e., classical test theory). They use graded item response theory. It is unnecessary to take points away for guessing or wrong answers because the model already accounts for guessing through a lower asymptote (the c parameter) on the Item Characteristic Curve (ICC). This is the curve that plots how likely a person is to answer correctly (on the y-axis) against their standing, theta (on the x-axis).
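For readers who haven't met the curve this comment describes: a lower asymptote of that kind appears in the standard three-parameter logistic (3PL) model. The sketch below is only an illustration of that textbook formula. Whether and how the SAT applies it is the commenter's claim, not something shown here, and the parameter values are made up.

```python
import math

def icc_3pl(theta: float, a: float, b: float, c: float) -> float:
    """Probability of a correct answer under the three-parameter logistic model.

    theta: test-taker's ability, a: discrimination, b: difficulty,
    c: lower asymptote (chance of a correct answer from guessing alone).
    """
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

# Illustrative values only: c = 0.2 mimics a blind guess among five choices.
for theta in (-4.0, 0.0, 4.0):
    print(theta, round(icc_3pl(theta, a=1.0, b=0.0, c=0.2), 3))
```

However low theta goes, the curve never drops below c, which is how the model builds guessing into the item itself rather than into a penalty.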

The fact that very few questions are left blank means it’s not nearly as big a deal as I had imagined, but there’s still a reasonable argument against the change.

For students who answer all the questions, the scoring change makes no mathematical difference whatsoever. The change translates and rescales their raw score a little, but it’s still a linear function of the number of right answers, and that’s all that matters. The raw score was always going to be transformed to give the final score, so applying this linear function behind the scenes is absolutely unnoticeable.
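A quick way to see the linearity claim: with no blanks, the number of wrong answers is just the total minus the number right. The sketch below assumes a hypothetical 20-question section and the old quarter-point deduction; the function names are illustrative.

```python
from fractions import Fraction

def old_raw_score(right: int, wrong: int) -> Fraction:
    """Old rule: +1 per right answer, -1/4 per wrong answer."""
    return right - Fraction(wrong, 4)

def new_raw_score(right: int, wrong: int) -> int:
    """New rule: +1 per right answer, nothing else counts."""
    return right

TOTAL = 20  # hypothetical section length

for right in range(TOTAL + 1):
    wrong = TOTAL - right  # no blanks, so every question is right or wrong
    old = old_raw_score(right, wrong)
    new = new_raw_score(right, wrong)
    # old = (5/4) * new - TOTAL/4: a rescale and a shift, nothing more
    assert old == Fraction(5, 4) * new - Fraction(TOTAL, 4)

print("old score is a linear function of the new score when nothing is left blank")
```

Since the rescaling is increasing, it preserves the ranking of students who answer everything.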

On the other hand, it makes a big difference for the few students who don’t answer all the questions. Before, they were treated fairly; now, they’re screwed (in that they get worse final scores than they would have received had they spent one minute answering A to every remaining question). The fact that there aren’t many of these students makes the issue less important to the world as a whole, but no less important to the students it affects.

The counterargument is that the “guessing penalty” confuses students. Maybe this is a bigger problem than I realize, but I doubt it confuses them much. Evidently it doesn’t confuse them into leaving lots of blanks, which would have been one plausible effect. I’d guess that most students just aren’t thinking very much about how the exam is scored as they take it (and the ones who are thinking about it probably understand the system), and that confusion over the scoring system has negligible effects on student scores.

My interpretation of the College Board’s decision is that their motivation was entirely public relations. It’s not that the guessing penalty did any harm to actual exam performance, but rather that it hurt the SAT’s reputation. It gave clueless people the incorrect impression that the SAT was scored in an inscrutable way that might somehow disadvantage them. If too many people think that, then the SAT will become less popular, and that would be bad for the College Board. (But it’s not clear that anyone outside the College Board is worse off if a student takes the ACT instead of the SAT.)

In short, the College Board made the test worse and thereby threw a small group of students under the bus, all in order to protect their lucrative racket of collecting fees. Yeah, I know they’re a non-profit, but when they pay $1.8 million a year to their president (in 2012, the latest year for which I could find their IRS form 990), he has a huge incentive to keep the money flowing.

First off, I’m with you that the non-profit status of the College Board deserves a qualifier somewhere between “dubious” and “criminal.” And I agree that this move is far more about optics than it is about substance. (The same could be said, I think, of most of the new revisions in the SAT.)

That said, if you (somewhat generously) rephrase “public relations” as “increased transparency,” it starts sounding better.

It all depends, I guess, on how those blank answers are distributed. If they’re concentrated in a few students, then yeah, you can argue they’re getting thrown under the bus. But if the distribution is more equitable (most students skip 0-2 problems, say) then it’s not so bad. And once news of the new guessing policy circulates, you might see that number of blanks drop to virtually zero, in which case, nobody’s getting screwed.

So to really make it fair, the scoring machine should just generate a random answer for every question that was left blank.

Tell me again why this is a good thing?

My father’s geometry teacher (ca. 1918) used to take off two points for errors. “Not only have you failed to get it right,” he would say, “you have also gotten it wrong.”

I love it.

I heard about a multiple-choice test where you had to assign probabilistic weights to the answers, and earned credit based on that. The scoring system was such that if you put 0% weight on the right answer, your score would theoretically be infinitely negative for the question (although I think the professor capped this at -10% per question). Even so, the average score in the class was negative.

And…What was the penalty for your weights not adding up to 100%? 🙂

You might be interested in the scoring system for the high school math contests run by the University of Waterloo:

“Scoring: Each correct answer is worth 5 in Part A, 6 in Part B, and 8 in Part C.

There is no penalty for an incorrect answer.

Each unanswered question is worth 2, to a maximum of 10 unanswered questions.”

No penalty. Also, no two points.

Sample here: http://www.cemc.uwaterloo.ca/contests/past_contests/2014/2014CayleyContest.pdf (See #7)
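A minimal sketch of that rule as quoted, in case anyone wants to play with it. The function name and arguments are mine, and it takes the number of correct answers per part as given rather than assuming how many questions each part has.

```python
def contest_score(correct_a: int, correct_b: int, correct_c: int,
                  unanswered: int) -> int:
    """Score under the quoted rule: 5/6/8 points per correct answer in Parts A/B/C,
    nothing for incorrect answers, and 2 points per blank for at most 10 blanks."""
    points = 5 * correct_a + 6 * correct_b + 8 * correct_c
    points += 2 * min(unanswered, 10)  # blanks beyond the tenth earn nothing
    return points

print(contest_score(3, 2, 1, 4))    # 15 + 12 + 8 from answers, 8 from four blanks
print(contest_score(0, 0, 0, 25))   # only the first ten blanks count
```

If each question has five choices (an assumption on my part), a blind guess is worth at most 8/5 points on average, so a blank at 2 points beats it until the ten-blank cap is reached.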

Interesting! I took puzzle tests like that, but without the maximum on unanswered questions. I think that maximum has a nice effect – seems to encourage you to get your hands dirty and not worry too much about screwing up, without creating an incentive for wild guesswork.

I think it is sad that a wild guess will, on average, count for more than an honest “I don’t know”. Knowing your limits is one of the most valuable insights you can gain. Admitting to them is one of the most courageous things one can do.

I’m with you. Unfortunately, a multiple-choice test like the SAT is a poor measure of many traits, including that healthy capacity for self-doubt.

“Out of every 25 questions, the typical student answers 24.

It’s strange—the system gives them no incentive to do this.”

I don’t think this is true. The system gives them no incentive to guess if they have absolutely no idea about a question, but this is (presumably; I have no specific experience of SATs but have done other exams with a similar system) rarely the case. If there is one option which you can eliminate quickly, your expectation from guessing rises from 0 to 1/16.

True. “No incentive” is poorly phrased; what I meant is that this is rarely an optimal strategy, especially for students who aren’t expecting to score above ~700 per section. They’re generally better off investing time in about 3/4 of the questions, making sure they get those right, and not bothering at all with the remainder. (To score a 600, it generally suffices to get roughly 12 right answers out of 20, provided you don’t have more than one wrong answer.)

You are working with a binary process. The implicit assumption in your article is that I either know the answer or I do not know the answer and that all my guesses are completely random.

What about situations when I know some of the options are downright wrong and some are a maybe? Then my guess – it is now an educated guess – is in with a better than 1 in 4 chance.

I am sure it is as important to test my knowledge of what is not true as it is to test what I know to be true. Allowing me to make an educated guess provides for that.

So negative marking will eliminate the completely random guess but provide some benefit to people who make an educated guess.

Ignorance and some knowledge are not the same.

Agreed. Moreover, I’d emphasize the system giving 2 points for a right answer and -1 for a wrong one, with 5 choices.

This way guessing is penalized until you eliminate 2 wrong answers, then choosing among 3 is neutral and between 2 gives a slight advantage.

The more you know, the more you get, as it should be.
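Those break-even points are easy to tabulate. The sketch below assumes a guess is uniform over whatever options remain after elimination; it compares the +2/-1 scheme from this comment with the old SAT's +1/-¼ rule, and the helper name is hypothetical.

```python
from fractions import Fraction

def expected_guess(reward, penalty, remaining: int) -> Fraction:
    """Expected points from guessing uniformly among the remaining options."""
    p_right = Fraction(1, remaining)
    return p_right * reward - (1 - p_right) * penalty

# Assumption: five choices to start, with 0 to 3 wrong options eliminated.
for remaining in (5, 4, 3, 2):
    two_one = expected_guess(2, 1, remaining)               # +2 / -1 scheme
    old_sat = expected_guess(1, Fraction(1, 4), remaining)  # +1 / -1/4 scheme
    print(remaining, two_one, old_sat)
```

Under +2/-1, guessing loses on average with five or four options left, breaks even at three, and pays at two. Under the old SAT rule, a blind guess among five breaks even and every elimination after that makes guessing worthwhile.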

Quite right – although the new policy still rewards those whose guesses are more educated, for the simple fact that they’re more likely to be right.

You’re quite right to push beyond the binary “known answer” vs. “random guess” framework I’ve set up, but I don’t think acknowledging those extra degrees of knowledge alters the final analysis of the two systems.

This whole topic should be given to all students studying statistics as for them it’s about as real world as it gets.

True. Teaching Stats, I found myself illustrating concepts by drawing upon examples from the world of testing – scores, averages, percentiles. Kind of a shame that this is the primary relationship so many kids have with data, but that being the case, might as well exploit it!

Hey, maybe I missed the explanation, but why no whiteboards? Just curious.

Yeah, good question with a weird/silly response: I moved from sunny California to cloudy England, and it’s harder to get enough sunlight to photograph the whiteboard. (Under artificial light, there’s usually a glare.)

Also, felt like I was stagnating artistically and needed a new medium to summon my muse. (Let me know if you see her, my muse. She’s been scarce for the last, oh, 27 years.)

Am I the only one who thinks it’s still preferable to guess in the penalized test example?

The expectancy for a guess is 0.25*1 + 0.75*(-0.25) = 0.0625 (meaning you gain a sixteenth of a point per guess on average).

While the example question shows only four answers, I seem to recall actual SAT questions having five. That gets you the four wrong answers per right answer needed to balance out perfectly on average at a -.25 penalty.

Yeah, sorry for the misleading illustration! Although, then again, see the title of the blog…

I never took one of his courses, but I’m told that Prof. Givental at Berkeley offered a huge bonus (maybe 40/100 points or so) for writing nothing false on the exam. There were still the full 100 points available if you wanted to take the exam the usual way, but you could also try to get an A+ by solving a fraction of the problems perfectly. I like the idea in theory, but I imagine it caused terror in practice.